A look inside our ISyE April Seminar

(17-04-2026) Check it out! Another month, another inspiring ISyE seminar. This month, we put the spotlight on our researchers Andrea Mencaroni and Carlos Miguel Palao.

ISyE's April Seminar

For April, we put the spotlight on Andrea Mencaroni, presenting his research on “When does learning help? On the generalization of deep reinforcement learning for dynamic algorithm configuration in carbon-aware scheduling”, and Carlos Miguel Palao, sharing his work on “Workload balancing in mixed-model assembly lines through worker assignation learning and training”. Read the abstracts of their research below.

Want to get involved in these topics or join the discussion on social media? You can! Check out the LinkedIn post.

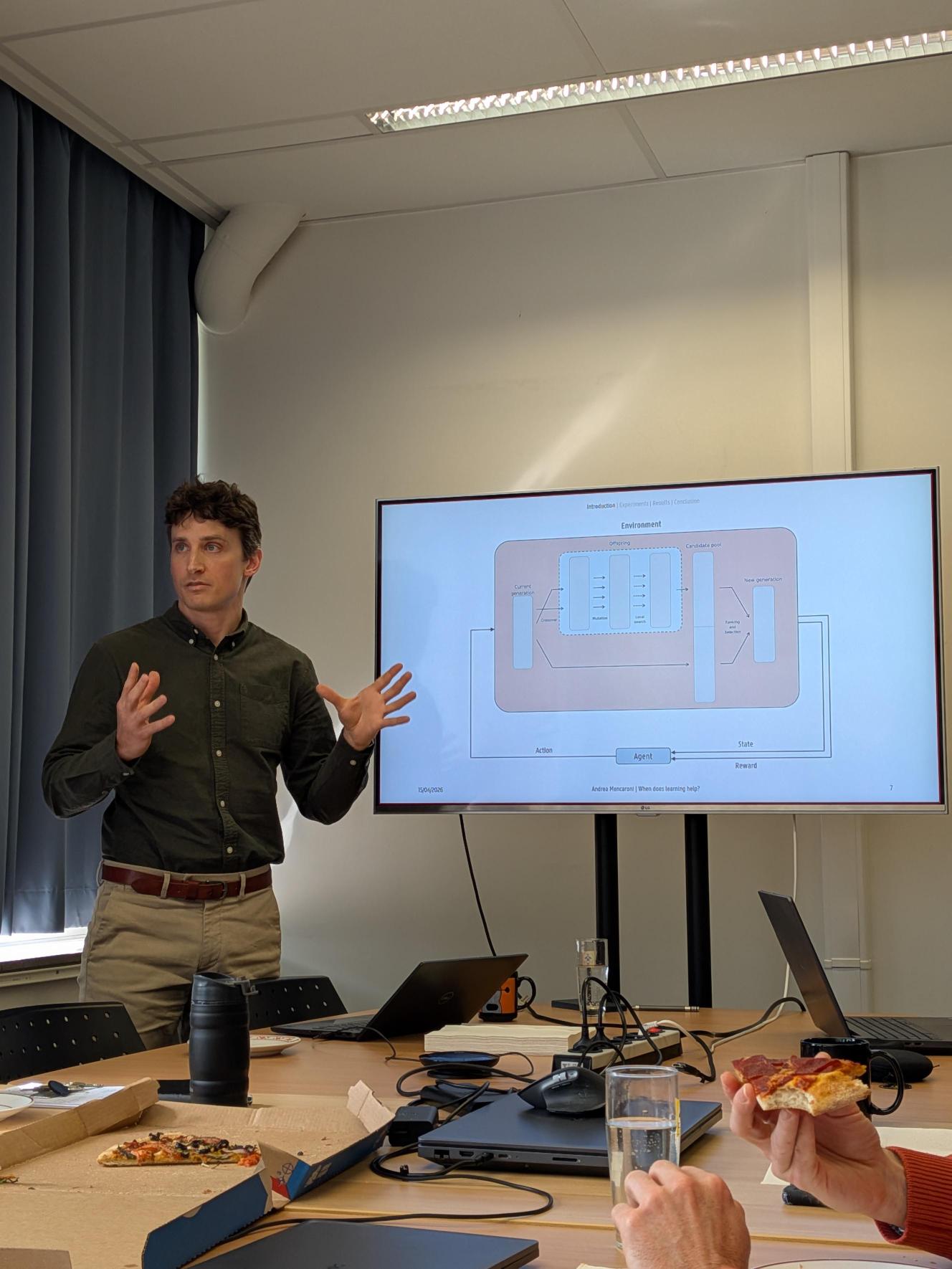

The generalization of deep reinforcement learning for dynamic algorithm configuration in carbon-aware scheduling

Deep reinforcement learning (DRL) has emerged as a promising tool for Dynamic Algorithm Configuration (DAC), enabling evolutionary algorithms to adapt their parameters online instead of relying on static tuned settings. Although DRL can learn effective control policies, training is computationally expensive. This cost is justifiable only if learned policies generalize, allowing a single training effort to transfer across instance types and problem scales. Yet, for real-world combinatorial optimization problems, it remains unclear whether this promise holds in practice and under which conditions the investment in learning pays off.

In this work, we investigate this question in the context of the carbon-aware permutation flow-shop scheduling problem. We develop a DRL-based DAC framework and train it exclusively on small, simple instances. We then deploy the learned policy on both similar and substantially more complex unseen instances. As a fair comparison, we benchmark it against a statically tuned baseline optimized under the same budget and on the same training instances. Our results show that the learned DRL policy transfers effectively to new problem instances. On simple instances similar to those used in training, DRL performs on par with the statically tuned baseline. However, as instance characteristics diverge and computational complexities increase, the DRL-controlled algorithm consistently outperforms static tuning. These findings indicate that DRL can learn robust, generalizable control policies which are effective beyond the training instance distributions. This ability to generalize across instance types makes the initial computational investment worthwhile, particularly in settings where static tuning struggles to adapt to changing problem scenarios.
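For readers new to the scheduling side of this abstract, here is a minimal sketch of how a candidate solution to a classical permutation flow-shop problem can be evaluated. It is background only: the abstract does not specify the carbon-aware objective, so this computes just the standard makespan. The function name `makespan` and the toy processing times are illustrative assumptions, not part of the presented framework.

```python
def makespan(permutation, proc):
    """Completion time of the last job on the last machine.

    permutation: job indices in processing order (same order on every machine).
    proc[j][k]:  processing time of job j on machine k (hypothetical toy data).
    """
    n_machines = len(proc[0])
    # completion[k] = time at which machine k finishes its most recent job
    completion = [0] * n_machines
    for job in permutation:
        prev_stage_done = 0  # when this job leaves the previous machine
        for k in range(n_machines):
            # A job starts on machine k once the machine is free
            # and the job has finished on machine k-1.
            start = max(completion[k], prev_stage_done)
            completion[k] = start + proc[job][k]
            prev_stage_done = completion[k]
    return completion[-1]


# Toy instance: 2 jobs, 2 machines.
proc = [[3, 2], [1, 4]]
print(makespan([0, 1], proc))
print(makespan([1, 0], proc))
```

An evolutionary algorithm would search over permutations using an evaluation like this one. In the setting described above, the parameters of that search are adjusted during the run by a learned policy rather than fixed in advance.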

Workload balancing in mixed-model assembly lines through worker assignation learning and training

Mixed-model assembly lines (MMALs) allow manufacturers to produce multiple product variants on a single line, but the resulting task variety amplifies the role of human operators and the impact of their individual competence on line performance. Assigning workers to unfamiliar workstations can shift bottlenecks and degrade cycle times, while over-specialisation limits workforce resilience. This tension between short-term efficiency and long-term competence development remains underexplored in assembly line balancing literature.

This work features a mixed-integer linear programming (MILP) model that jointly optimises worker assignment, training planning, and workload balancing in MMALs across a multi-shift horizon. Individual learning is modelled through a discretised formulation of De Jong's learning curve, which is piecewise-linearised to preserve model tractability while capturing task-level competence evolution. A weighted objective balances cycle time minimisation with a worker bottleneck measure that incentivises cross-training. To address the computational complexity arising from the learning curve discretisation, a greedy heuristic with local search is proposed to generate high-quality initial solutions for the exact solver.
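As a rough illustration of the learning-curve idea, the sketch below evaluates De Jong's curve in its usual textbook form, T(n) = T1 * (M + (1 - M) * n^(-b)), and approximates it with straight segments between a few breakpoints. The parameter values, the breakpoints, and the function names are assumptions chosen for illustration. The abstract does not give the discretisation or the MILP formulation, so this should not be read as the author's model.

```python
def de_jong_time(n, t1, m, b):
    """Task time at the n-th repetition under De Jong's learning curve.

    t1: time of the first repetition
    m:  incompressibility factor in [0, 1] (share of time that never improves)
    b:  learning exponent (> 0)
    """
    if n < 1:
        raise ValueError("repetition count must be >= 1")
    return t1 * (m + (1.0 - m) * n ** (-b))


def linearise(breakpoints, t1, m, b):
    """Sample the curve at the chosen breakpoints (hypothetical choice)."""
    return [(n, de_jong_time(n, t1, m, b)) for n in breakpoints]


def interpolate(points, n):
    """Piecewise-linear approximation of the curve at repetition n."""
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if x0 <= n <= x1:
            w = (n - x0) / (x1 - x0)
            return y0 + w * (y1 - y0)
    raise ValueError("n lies outside the breakpoint range")


# Illustrative parameters only.
points = linearise([1, 4, 16], t1=10.0, m=0.25, b=0.5)
print(de_jong_time(2, 10.0, 0.25, 0.5))  # exact curve value
print(interpolate(points, 2))            # piecewise-linear approximation
```

Because the curve is convex in n, interpolating between breakpoints never underestimates the task time, and adding breakpoints narrows the gap at the cost of a larger model.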

The model is evaluated through a case study featuring two production ramp-up scenarios — the introduction of new product models and the onboarding of new workers — under static and dynamic objective function weight profiles. Results demonstrate the trade-off between assembly performance and workforce resilience and highlight how dynamically adjusting assignment priorities over the planning horizon can mitigate the impact of workforce disruptions.